Entrepreneurial Geekiness

Leadership discussion session at PyDataLondon 2024

At this year’s PyDataLondon 2024 conference I ran my regular Leadership discussion for team leaders & department heads. It is an open session for 40-60 senior people, under Chatham House rules, to discuss issues and seek feedback. We had a lot of happy people in the room for this session (photo below – taken with the understanding that this would be made public). This is the 8th and a deeper session I ran at PyDataGlobal 2022 was written up.

Ian Ozsvald always does a great job running the leader’s session (see his write-up in the comments). I came away with a bunch of new contacts and some useful highlights. Have you noticed how your own mood and stress can impact your team – maybe you need a holiday? Legacy code is “code that makes money”, and shouldn’t be thought of so negatively. And Laszlo Sragner on understanding motivation with self-determination theory “for an individual to be continuously motivated in their role, three factors need to be present: autonomy, mastery and relatedness.” (see his post in the comments) – via Ryan on LI

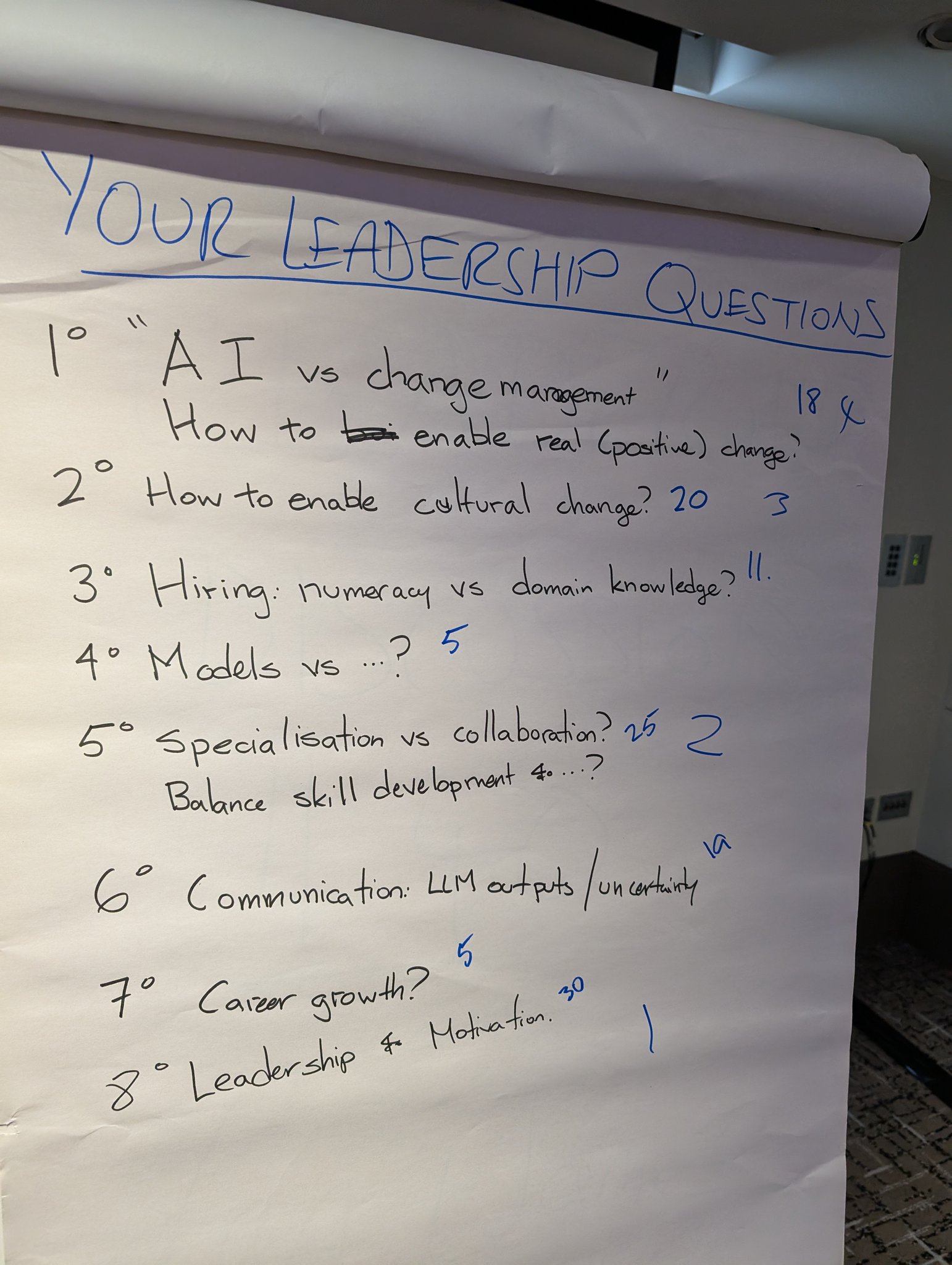

In this session we talked about demotivated team members, collaboration vs specialisation and enabling change in resistant organisations. I start the sessions by gathering feedback from attendees which is shown below. We got 8 and voted these to a top 3.

Leadership vs demotivation

What do you do if a team member is given a project and they accept it, but they don’t want it?

- Poor motivation might mean they’re on a “low energy, low skill” project given their interests – maybe that can be paired with a second more-fun project

- Ryan wrote this up and expanded it on LI (and you should go read it!)

- Working collaboratively could be more fun than solo projects

- Self determination theory talks on autonomy, mastery and purpose – perhaps they’ve hit a point of frustration that needs to be identified?

- Further detail from Laszlo on You Only Need These 3 Data Roles in a Data Driven Enterprise

- Ask them “how can we make this more interesting for you?”

- Alienation theory was briefly noted

- Some might think “legacy is boring” but “legacy is the stuff that makes money” – help the person see why their project is valuable in the org and how this could help them e.g. with visibility, winning points, promotion prospects etc

- Successful teams should never burn out, so what’s blocking success for this person?

- A lack of motivation might mean they don’t understand the value of the project?

- Founder grumpiness can infect the team – sometimes the wider team need to do an intervention to send you on holiday so after you can lead with confidence

Specialisation vs collaboration

Once you’ve built a team you win more by collaborating further afield, not just be specialising in the team – how to encourage this?

- Think on circles of impact – things you’re supposed to do around you, your team, the department and the company – how can you multiply your impact?

- A large org uses a structure that looks to career growth, business impact and skill-driven growth – sometimes people have to focus on business impact, other times they can focus on skill-driven (“CV driven”) development – this is acknowledged and balanced out

- Juniors need to focus on career development and maybe they are motivated personally to gain skills – you have to help them manage their expectations on business need vs personal need

- In another large org they’re experimenting with 3-person self-directed teams who self-organise (under a “better to ask forgiveness than permission” experiment), they figure out the best way to engage with the wider business

- The idea of moving to a Data Mesh Architecture encompassing principles around domain ownership, data as a product, self serve data infrastructure platform and federated governance to enable teams to engage without being silos in a growing organisation

During the session I also handed out snake-stickers so folk could self-identify in the following lunch and afternoon sessions, I’m told this worked well and helped folk find other leaders to continue the conversation:

Enabling change

How do you deal with managers and leaders who resist change with arguments like “I can do it with intuition well enough already” (when it is clear that valuable improvements can be made beyond this).

- Avoid any big-change projects that’ll surprise the end users at the “successful end” of the development phase – instead get several end users involved early so that confidence builds and positive change occurs from early on

- What numbers do they use already to drive decision? What causes them to get buy in? Could you couple your ideas with the results they’re seeking?

- With a “conservative” team you might take them slowly on a journey from simple automation and reporting to increasing complexity, reducing fear and increasing the size of outcomes

- Can you find ambassadors who help to bridge the gaps of fear and mis-understanding, then give them examples of what’s worked elsewhere in the organisation to build confidence?

- People can be afraid of new technology and process change so support anything that derisks the new approach and solves whatever causes the resistance

During the session I also talked about a practice I’ve developed called a “crit” (constructive critique) which I use in my private RebelAI leadership group. A member gets to present a problem and seek feedback from 12+ people on the call. They get 5 minutes to share some slides setting the scene (context, what they need, what worked and failed, what they’re after from the feedback) and then 40 minutes of feedback is given.

I suggested that this be taken back as a kind of Mastermind Group , you’d get 5+ people in your org, agree to meet monthly, then take turns sharing opportunities and gaining feedback in a trusted group. We go further inside RebelAI including an active Slack group, but to build a useful, trusted and supportive group the regular meeting and “crits” would be a great starting point.

If you are in a data science leadership role, you’ve got opportunity and challenge ahead and you’d like either some advice, or perhaps to be a part of my private data science leadership group (RebelAI), contact me through LinkedIn.

Ian is a Chief Interim Data Scientist via his Mor Consulting. Sign-up for Data Science tutorials in London and to hear about his data science thoughts and jobs. He lives in London, is walked by his high energy Springer Spaniel and is a consumer of fine coffees.

What I’ve been up to since 2022

This has been terribly quiet since July 2022, oops. It turns out that having an infant totally sucks your time! In the meantime I’ve continued to build up:

- Training courses – I’ve just listed my new Fast Pandas course plus the existing Successful Data Science Projects and Software Engineering for Data Scientists with runs of all three for July and September

- My NotANumber newsletter, it goes out every month or so, carries Python data science jobs and talks on my strategic work, RebelAI leadership community and Higher Performance Python book updates

- RebelAI – my private data science leadership community (there’s no web presence, just get in touch) for “excellent data scientists turned leaders” – this is having a set of very nice impacts for members

- High Performance Python O’Reilly book – we’re working on the 3rd edition

- PyDataLondon 2024 has a great schedule and if you’re coming – do find me and say hi!

Ian is a Chief Interim Data Scientist via his Mor Consulting. Sign-up for Data Science tutorials in London and to hear about his data science thoughts and jobs. He lives in London, is walked by his high energy Springer Spaniel and is a consumer of fine coffees.

Upcoming discussion calls for Team Structure and Buidling a Backlog for data science leads

I ran another Executives at PyData discussion session for 50+ leaders at our PyDataLondon conference a couple of weeks back. We had great conversation which dug into a lot of topics. I’ve written up notes on my NotANumber newsletter. If you’re a leader of DS and Data Eng teams, you probably want to review those notes.

To follow on the conversations I’m going to run the following two (free) Zoom based discussion sessions. I’ll be recording the calls and adding notes to future newsletters. If you’d like to join, fill in this invite form and I can add you to the calendar invite. You can lurk and listen or – better – join in with questions.

Monday July 11, 4pm (UK time), Data Science Team Structure – getting a good structure for your org, hybrid vs fully remote practices, processes that support your team, how to avoid being left out- Monday August 8th, 4pm (UK time), Backlog & Derisking & Estimation – how to build a backlog, derisking quickly and estimating the value behind your project

I’m expecting a healthy list of issues and good feedback and discussion for both calls. I’ll be sharing an agenda in advance to those who have contacted me. My goal is to turn these into bigger events in the future.

Ian is a Chief Interim Data Scientist via his Mor Consulting. Sign-up for Data Science tutorials in London and to hear about his data science thoughts and jobs. He lives in London, is walked by his high energy Springer Spaniel and is a consumer of fine coffees.

My first commit to Pandas

I’ve used the Pandas data science toolkit for over a decade and I’ve filed a couple of issues, but I’ve never contributed to the source. At the weekend I got to balance the books a little by making my first commit. With this pull request I fixed the recent request to update the pct_change docs to make the final example more readable.

The change was trivial – adding “periods=-1” to the argument and updating the docstring. The build process was a lot more involved – thankfully I was on a call with PyLadies London to try to help others make their first contribution to Pandas and I had organiser Marco Gorelli (a core contributor) to help when needed.

Ultimately it boiled down to setting up a docker environment, running a new example in my shell, updating the relevant docstring on the local filesystem and then following the “contributing to the documentation” guide. My initial commit fell foul of the docstring style rules and the automated checking tools in the docker environment point this out. Once the local filesystem checker scripts were happy I pushed to my fork, created a PR and shortly after everything was done.

All in it took 45 minutes to get the environment setup, another 45 minutes to make my changes and figure out how to run the right scripts, then a bit longer to push and submit a PR (followed by overnight patience before it got picked up by the team).

When I teach my classes I always recommend that a good way to learn new development practices (like the automated use of black & flake8 in a precommit process) is to submit small fixes to open source projects – you learn so much along the way. I’ve not used docker in years and I don’t use automated docstring checking tools, so both presented nice little points for learning. I also have never used the pct_change function in Pandas…and now I have.

If you’ve not yet made a commit to an open source project, do have a think about it – you’ll get lots of hand holding (just be patient, positive and friendly when you leave comments) and you can stick a reference to the result on your CV for bragging rights. And you’ll have made the world a slightly better place.

Ian is a Chief Interim Data Scientist via his Mor Consulting. Sign-up for Data Science tutorials in London and to hear about his data science thoughts and jobs. He lives in London, is walked by his high energy Springer Spaniel and is a consumer of fine coffees.

Skinny Pandas Riding on a Rocket at PyDataGlobal 2020

On November 11th we saw the most ambitious ever PyData conference – PyData Global 2020 was a combination of world-wide PyData groups putting on a huge event to both build our international community and to leverage the on-line only conferences that we need to run during Covid 19.

The conference brought together almost 2,000 attendees from 65 countries with 165 speakers over 5 days on a 5-track schedule. All speaker videos had to be uploaded in advance so they could be checked and then provided ahead-of-time to attendees. You can see the full program here, the topic list was very solid since the selection committee had the best of the international community uploading their proposals.

The volunteer organising committee felt that giving attendees a chance to watch all the speakers at their leisure took away constraints of time zones – but we wanted to avoid the common end result of “watching a webinar” that has plagued many other conferences this year. Our solution included timed (and repeated) “watch parties” so you could gather to watch the video simultaneously with others, and then share discussion in chat rooms. The volunteer organising committee also worked hard to build a “virtual 2D world” with Gather.town – you walk around a virtual conference space (including the speakers’ rooms, an expo hall, parks, a bar, a helpdesk and more). Volunteer Jesper Dramsch made a very cool virtual tour of “how you can attend PyData Global” which has a great demo of how Gather works – it is worth a quick watch. Other conferences should take note.

Through Gather you could “attend” the keynote and speaker rooms during a watch-party and actually see other attendees around you, you could talk to them and you could watch the video being played. You genuinely got a sense that you were attending an event with others, that’s the first time I’ve really felt that in 2020 and I’ve presented at 7 events this year prior to PyDataGlobal (and frankly some of those other events felt pretty lonely – presenting to a blank screen and getting no feedback…that’s not very fulfilling!).

I spoke on “Skinny Pandas Riding on a Rocket” – a culmination of ideas covered in earlier talks with a focus on getting more into Pandas so you don’t have to learn new technologies and see Vaex, Dask and SQLite in action if you do need to scale up your Pythonic data science.

I also organised another “Executives at PyData” session aimed at getting decision makers and team leaders into a (virtual) room for an hour to discuss pressing issues. Given 6 iterations of my “Successful Data Science Projects” training course in London over the last 1.5 years I know of many issues that repeatedly come up that plague decision makers on data science teams. We got to cover a set of issues and talk on solutions that are known to work. I have a fuller write-up to follow.

The conference also enabled a “pay what you can” model for those attending outside of a corporate ticket, this brought in a much wider audience that could normally attend a PyData conference. The goal of the non-profit NumFOCUS (who back the PyData global events) is to fund open source so the goal is always to raise more money and to provide a high quality educational and networking experience. For this on-line global event we figured it made sense to open out the community to even more folk – the “pay what you can” model is regarded as a success (this is the first time we’ve done it!) and has given us some interesting attendee insights to think on.

There are definitely some lessons to learn, notably the on-boarding process was complex (3 systems had to be activated) – the volunteer crew wrote very clear instructions but nonetheless it was a more involved process than we wanted. This will be improved in the future.

I extend my thanks to the wider volunteer organising committee and to NumFOCUS for making this happen!

Ian is a Chief Interim Data Scientist via his Mor Consulting. Sign-up for Data Science tutorials in London and to hear about his data science thoughts and jobs. He lives in London, is walked by his high energy Springer Spaniel and is a consumer of fine coffees.

Read my book

Oreilly High Performance Python by Micha Gorelick & Ian Ozsvald AI Consulting

Co-organiser

Trending Now

1Leadership discussion session at PyDataLondon 2024Data science, pydata, RebelAI2What I’ve been up to since 2022pydata, Python3Upcoming discussion calls for Team Structure and Buidling a Backlog for data science leadsData science, pydata, Python4My first commit to PandasPython5Skinny Pandas Riding on a Rocket at PyDataGlobal 2020Data science, pydata, PythonTags

Aim Api Artificial Intelligence Blog Brighton Conferences Cookbook Demo Ebook Email Emily Face Detection Few Days Google High Performance Iphone Kyran Laptop Linux London Lt Map Natural Language Processing Nbsp Nltk Numpy Optical Character Recognition Pycon Python Python Mailing Python Tutorial Robots Running Santiago Seb Skiff Slides Startups Tweet Tweets Twitter Ubuntu Ups Vimeo Wikipedia